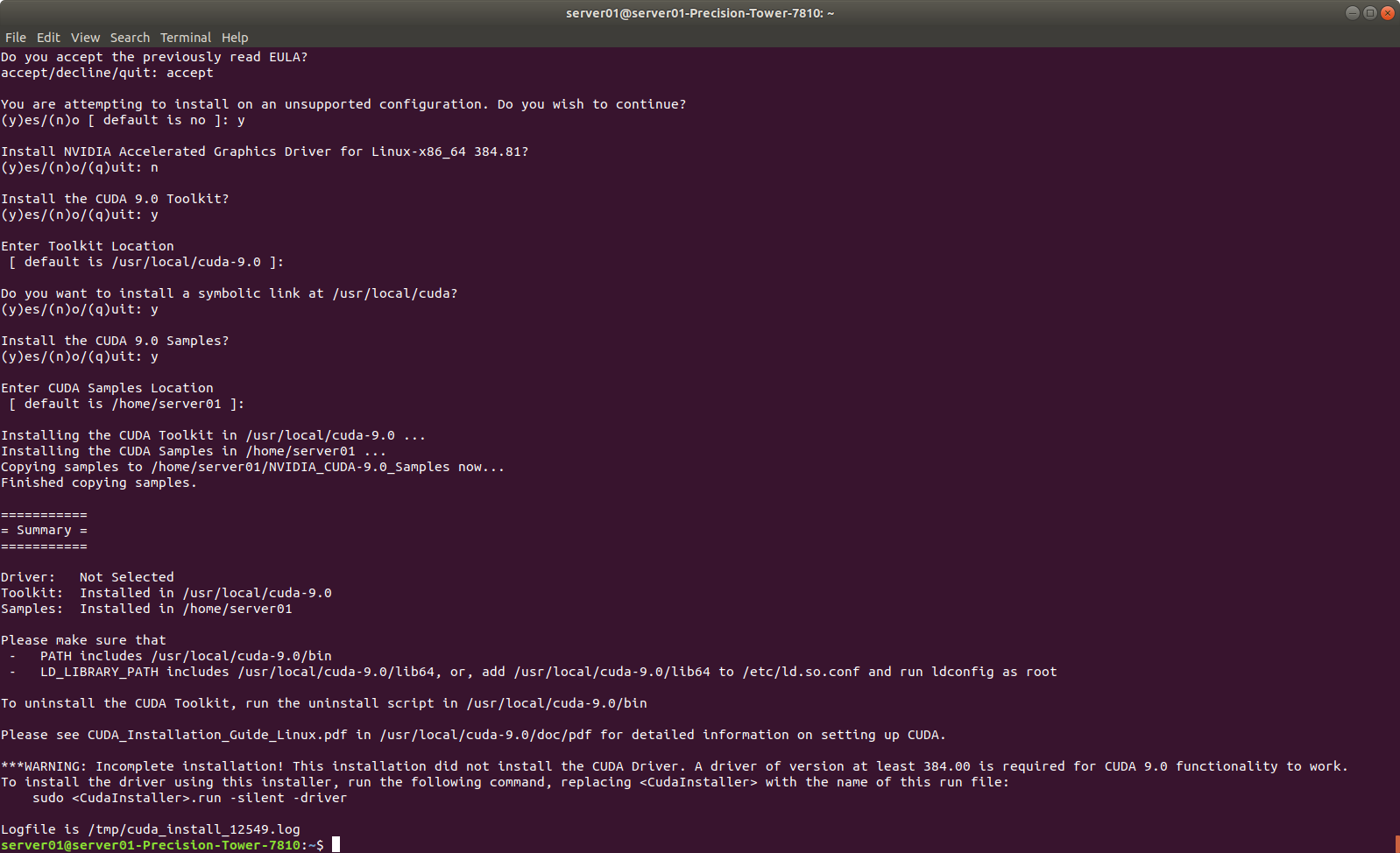

The core of NVIDIA® TensorRT™ is a C++ library that facilitates high-performance inference on NVIDIA graphics processing units (GPUs). TensorRT takes a trained network, which consists of a network definition and a set of trained parameters, and produces a highly optimized runtime engine that performs inference for that network. TensorRT provides APIs via C++ and Python that help to express deep learning models via the Network Definition API or load a pre-defined model via the parsers that allow TensorRT to optimize and run them on an NVIDIA GPU. TensorRT applies graph optimizations, layer fusions, among other optimizations, while also finding the fastest implementation of that model leveraging a diverse collection of highly optimized kernels. TensorRT also supplies a runtime that you can use to execute this network on all of NVIDIA's GPUs from the NVIDIA Pascal™ generation onwards. TensorRT also includes optional high speed mixed precision capabilities with the NVIDIA Pascal, NVIDIA Volta™, NVIDIA Turing™, NVIDIA Ampere architecture, NVIDIA Ada Lovelace architecture, and NVIDIA Hopper™ architectures.

This might be an optional step, but it is always good to first remove any previously installed NVIDIA drivers: `sudo apt-get purge *nvidia*`

Next, let's install the latest driver: `sudo apt install nvidia-driver-455`

After this, we need to restart the computer to finalize the driver installation. Next we can verify whether the driver was successfully installed: `nvidia-smi`. The output should list your GPU together with the installed driver version.

Next we can install the CUDA toolkit: `sudo apt install nvidia-cuda-toolkit`

Add `export CUDA_PATH=/usr` at the end of your shell startup file. Now your CUDA installation should be complete, and `nvidia-smi` should indicate that you have CUDA 11.1 installed.

Test the CUDA toolkit installation / configuration

One of the best ways to verify whether CUDA is properly installed is using the official "CUDA-sample". Ubuntu does not package them as part of "nvidia-cuda-toolkit", but we can download them directly from NVIDIA's GitHub page with `wget`.

For whatever reason, NVIDIA did not choose to include a modern build system (e.g. cmake), but ships a plain old Makefile instead. If just running `make` does not work for you, carefully read the error messages and see whether, for example, some required dependencies are not installed.

In order to help the build process a little, it might be advisable to specify the compute architecture of your GPU.

- You can find out your GPU by running `nvidia-smi`.
- Next google your GPU to find out the corresponding compute architecture. For the Quadro RTX 3000, it is "turing", version 7.5.
- Specify the architecture version when running make.

If the compilation was successful, you can try out one of the samples: `bin/x86_64/linux/release/immaTensorCoreGemm`. You should see output that contains the following or similar lines: `M: 4096 (16 x 256)` and `Computing... using high performance kernel compute_gemm_imma`.

Since all of the explanations I found so far were not satisfying, here are the steps I came up with to install the latest NVIDIA driver (465) with CUDA 11.3. I did NOT test it for any other Ubuntu versions than 20.04, but it should work for 18.04 to 21.

First you have to uninstall all CUDA and NVIDIA related drivers and packages: `sudo apt-get purge nvidia-*`

Then (if not already done) disable nouveau.

Opt out of installing the NVIDIA drivers during the CUDA installation and install the drivers separately: `sudo sh 'NVIDIA-Linux-x86_64-465.19.01.run'`. Also check if the driver is compatible with your GPU model! (In general that should be the case.) IMPORTANT if you need 32-bit support: several applications only run with 32-bit drivers (like Steam).

Follow the post-installation instructions found in the CUDA Toolkit Installation Guide for Linux. This involves updating the PATH and LD_LIBRARY_PATH environment variables with lines beginning `export PATH=/usr/local/cuda-11.3/bin$` and `export LD_LIBRARY_PATH=/usr/local/cuda-11.3/lib64\`.
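For the post-installation step that updates PATH and LD_LIBRARY_PATH, the export lines in this post are cut off, so here is a minimal sketch of one way to do it rather than the exact commands from the guide. It assumes the toolkit lives in `/usr/local/cuda-11.3` (the path used in the post); the `CUDA_HOME` variable and the `prepend_dir` helper are illustrative names and not part of any NVIDIA tooling. The helper puts a directory at the front of a colon-separated variable and skips it if it is already listed, so sourcing the snippet more than once does not pile up duplicates.

```shell
# Sketch only: CUDA_HOME and prepend_dir are hypothetical names for illustration.
# Assumed install location, taken from the paths mentioned in the post.
CUDA_HOME="${CUDA_HOME:-/usr/local/cuda-11.3}"

# prepend_dir VAR DIR
# Put DIR at the front of the colon-separated list stored in VAR,
# unless DIR is already one of its entries.
prepend_dir() {
    var_name=$1
    dir=$2
    # Read the current value of the variable whose name is in var_name.
    eval "current=\${$var_name:-}"
    case ":${current}:" in
        *":${dir}:"*)
            ;;  # already listed, leave the variable unchanged
        *)
            # Only add a ':' separator when the old value was non-empty.
            eval "export $var_name=\"\$dir\${current:+:\$current}\""
            ;;
    esac
}

prepend_dir PATH "${CUDA_HOME}/bin"
prepend_dir LD_LIBRARY_PATH "${CUDA_HOME}/lib64"
```

Placing these lines at the end of your shell startup file should make the change persistent for new terminals. If your toolkit is installed elsewhere, set `CUDA_HOME` before the snippet runs.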
